The LLM Era Is Changing How HR Teams Analyze Feedback

We are now firmly in the era of Large Language Models (LLMs). These AI systems have rapidly become accessible to organizations, enabling teams to experiment with powerful text analysis tools.

For many Human Resources and Employee Experience leaders, this accessibility opens new possibilities for understanding employee feedback.

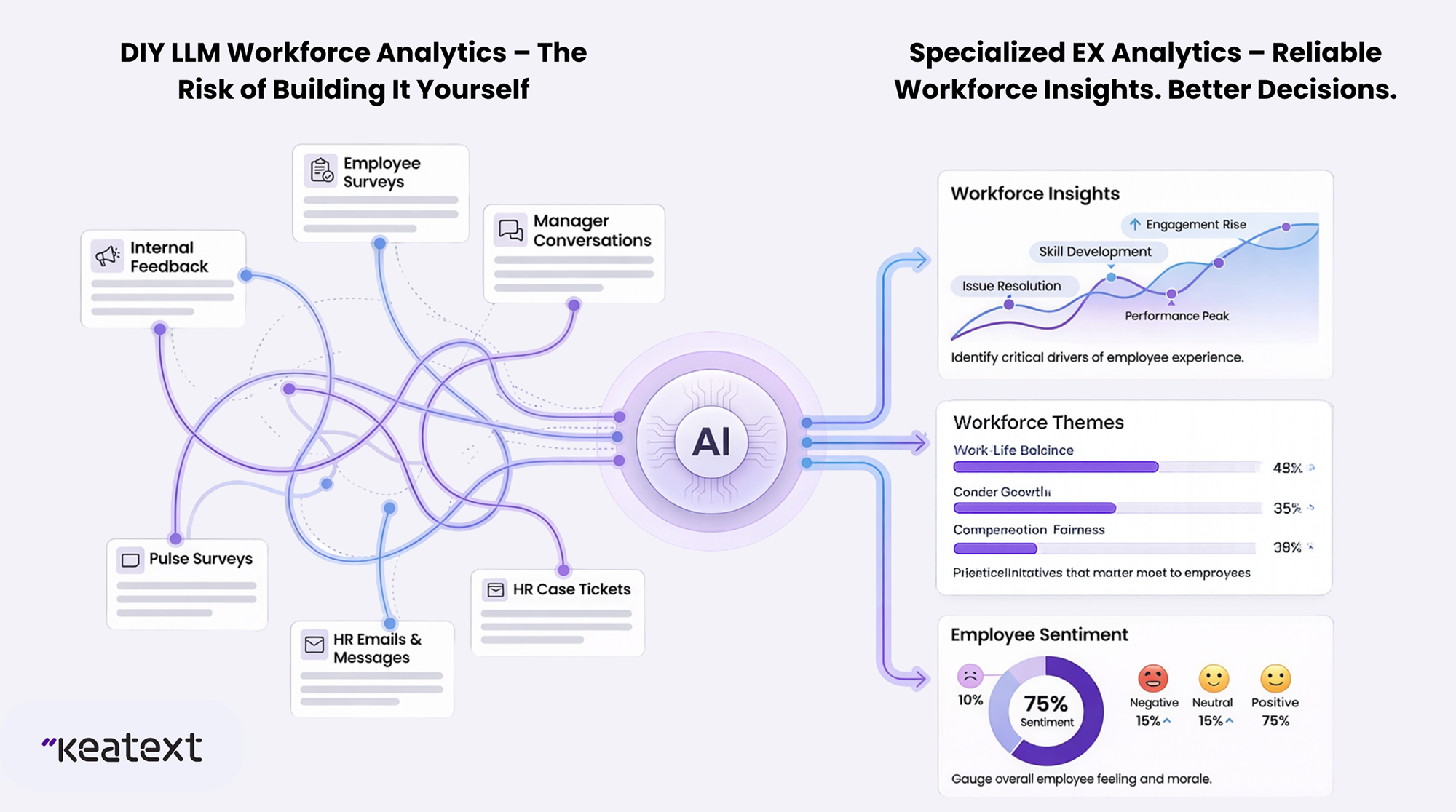

With just a few API calls, HR teams can connect surveys, engagement feedback, and internal comments to an AI model and ask it to identify sentiment, themes, or workplace concerns.

At first glance, the process seems straightforward. Feed employee feedback into a language model, prompt it to analyze sentiment, and generate insights automatically.

However, before building a DIY employee feedback analytics system, it is important to understand the technical realities behind how these models operate.

While LLMs are powerful tools, their probabilistic nature can introduce reliability challenges that HR teams should carefully consider when analyzing workforce feedback.

The Reliability Check: Is Your Workforce Insight Consistent?

The most significant change LLMs introduce is in how the system “thinks.” Traditional analytics is deterministic: it relies on fixed calculations, so the same input always produces the same output.

This consistency allows teams to trust their dashboards, reports, and analytics pipelines.

LLMs behave differently.

They are probabilistic: instead of computing a fixed output, they estimate the most likely response based on patterns learned during training, producing a “best guess” each time.

This probabilistic nature introduces variability.

Even when analyzing identical pieces of employee feedback, the model’s interpretation may change slightly depending on context, formatting, or surrounding inputs.

Over time, these small variations can create inconsistencies in sentiment scores, topic classification, or trend analysis.

For HR leaders relying on workforce insights to improve engagement, culture, and employee experience, such inconsistencies can lead to misleading conclusions.
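The contrast between fixed and probabilistic outputs can be illustrated with a small simulation. This is a minimal sketch, not a real model: the `LABEL_PROBS` values are hypothetical probabilities invented for the example, and `agreement_rate` is an illustrative helper that reports how often repeated runs on the same comment return the same label.

```python
import random
from collections import Counter

# Hypothetical probabilities a model might assign to one comment (assumed values).
LABEL_PROBS = {"negative": 0.55, "neutral": 0.35, "positive": 0.10}

def deterministic_label(probs):
    """Always return the highest-probability label: same input, same output."""
    return max(probs, key=probs.get)

def sampled_label(probs, rng):
    """Draw one label at random, weighted by its probability."""
    labels = list(probs)
    weights = [probs[label] for label in labels]
    return rng.choices(labels, weights=weights, k=1)[0]

def agreement_rate(labels):
    """Share of runs that match the most common label across all runs."""
    most_common_count = Counter(labels).most_common(1)[0][1]
    return most_common_count / len(labels)

rng = random.Random(0)  # seeded so the simulation itself is repeatable
fixed_runs = [deterministic_label(LABEL_PROBS) for _ in range(200)]
sampled_runs = [sampled_label(LABEL_PROBS, rng) for _ in range(200)]

print(agreement_rate(fixed_runs))    # 1.0
print(agreement_rate(sampled_runs))  # below 1.0: the runs disagree with each other
```

Running the same comment through a real model several times and computing a similar agreement rate is one simple way to check how stable its labels are before trusting a dashboard built on them.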

The Implications of Probabilistic Interpretation

When an AI model analyzes an employee comment, it predicts the most likely interpretation based on its training data.

For example, an employee survey response like:

“Communication between departments has become difficult since the restructuring.”

might be interpreted as:

- Collaboration issue

- Leadership communication concern

- Organizational change challenge

The model selects whichever interpretation appears most probable based on the prompt and context.

However, subtle differences in wording, formatting, or surrounding feedback can influence the probability distribution used to make this prediction.

When analyzing large datasets of employee feedback, these small variations can produce fluctuating topic classifications or sentiment scores.

For HR teams monitoring engagement trends, such instability can complicate long-term workforce analysis.
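To make the idea of a shifting probability distribution concrete, the sketch below converts raw scores for the three interpretations above into probabilities with a softmax. The scores are hypothetical numbers chosen for illustration, not output from any real model; the point is only that a small change in scores, such as one caused by rewording, can change which topic comes out on top.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    exps = {label: math.exp(value - highest) for label, value in scores.items()}
    total = sum(exps.values())
    return {label: value / total for label, value in exps.items()}

def top_label(scores):
    """Return the label with the highest probability."""
    probs = softmax(scores)
    return max(probs, key=probs.get)

# Hypothetical scores for the original comment (assumed values).
original = {
    "Collaboration issue": 2.00,
    "Leadership communication concern": 1.95,
    "Organizational change challenge": 1.50,
}

# Hypothetical scores after a slight rewording or formatting change.
reworded = {
    "Collaboration issue": 1.95,
    "Leadership communication concern": 2.00,
    "Organizational change challenge": 1.50,
}

print(top_label(original))  # Collaboration issue
print(top_label(reworded))  # Leadership communication concern
```

When two interpretations are nearly tied, as in this example, the reported topic is sensitive to very small shifts, which is what shows up as fluctuating classifications across a large dataset.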

The Attention Bias in AI Feedback Analysis

Another challenge arises from how language models process large volumes of text.

Employee feedback often appears in long-form formats such as open-ended survey responses, exit interviews, or internal discussion channels.

In these cases, certain biases in model attention can influence how feedback is interpreted.

Batch Contamination

When multiple employee comments about the same workplace issue appear together in a dataset, the model may begin associating unrelated feedback with that issue.

For example, during a major organizational change, many employees may comment on leadership decisions or communication challenges.

If these comments appear together in a dataset, the AI model may begin interpreting neutral comments about workload or project delays as leadership criticism.

This effect, referred to here as batch contamination, can distort how workplace issues are categorized.

Over time, it may exaggerate the perceived scale of certain organizational challenges.
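The difference between batched and isolated inputs can be sketched as follows. The prompt wording in `PROMPT_TEMPLATE` and both helper functions are hypothetical and do not correspond to any vendor's API; no model is called. The sketch only shows what each approach puts in front of the model.

```python
# Hypothetical prompt template; the wording is an assumption for illustration.
PROMPT_TEMPLATE = (
    "Classify the topic of this employee comment.\n"
    "Comment: {comment}\n"
    "Topic:"
)

def build_batched_prompt(comments):
    """One prompt holding every comment, so each is read alongside the others."""
    joined = "\n".join(f"- {comment}" for comment in comments)
    return PROMPT_TEMPLATE.format(comment=joined)

def build_isolated_prompts(comments):
    """One prompt per comment, so no comment shares context with another."""
    return [PROMPT_TEMPLATE.format(comment=comment) for comment in comments]

comments = [
    "Leadership did not explain the restructuring.",
    "Communication from leadership has been poor.",
    "My project is delayed by two weeks.",
]

batched = build_batched_prompt(comments)
isolated = build_isolated_prompts(comments)

print(len(isolated))                        # 3
print("leadership" in batched.lower())      # True
print("leadership" in isolated[2].lower())  # False
```

In the batched prompt, the neutral comment about a project delay sits next to two leadership complaints. In the isolated version it is classified with no surrounding feedback. Whether isolation fully removes the effect for a given model is not something this sketch demonstrates, and it comes at the cost of more API calls.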

The “Lost-in-the-Middle” Effect

Language models also face challenges when analyzing long pieces of text.

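Research on long-context behavior, including the 2023 study “Lost in the Middle: How Language Models Use Long Contexts” by Liu et al., has shown that many models use information at the beginning and end of a long input more reliably than information in the middle.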

In employee feedback, important context often appears mid-response, where employees explain the root cause of their concerns.

If the model overlooks this portion of the feedback, it may generate an incomplete or incorrect interpretation of the issue.

For HR teams seeking to understand the drivers of engagement or dissatisfaction, these misinterpretations can weaken the reliability of insights.
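One common way teams try to limit position effects is to split long responses into shorter, overlapping chunks so that no passage sits deep in the middle of a long input. The sketch below is a minimal illustration under that assumption. The function name `split_into_chunks` and the default sizes are hypothetical choices, and word counts stand in for the token counts a real model would use.

```python
def split_into_chunks(text, max_words=40, overlap=10):
    """Split text into word-based chunks that overlap with their neighbors."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap  # how far each new chunk advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

# Synthetic 100-word response standing in for a long exit-interview answer.
response = " ".join(f"word{i}" for i in range(100))
chunks = split_into_chunks(response)

print(len(chunks))            # 3
print(chunks[1].split()[0])   # word30
```

Each chunk can then be analyzed separately and the results combined. The overlap keeps sentences that straddle a boundary visible in both neighboring chunks, although combining per-chunk results into one interpretation introduces its own judgment calls.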

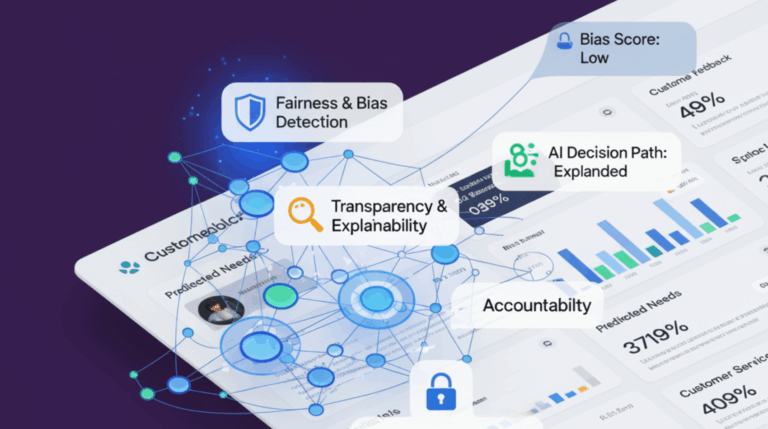

Why This Matters for Employee Experience Leaders

Employee feedback plays a critical role in shaping workplace culture and organizational strategy.

HR teams rely on feedback analytics to identify engagement challenges, understand employee concerns, and guide leadership decisions.

If sentiment analysis fluctuates or key themes are misclassified, organizations may misinterpret workforce sentiment.

This can lead to:

- Misaligned employee engagement initiatives

- Incorrect prioritization of culture improvements

- Leadership decisions based on incomplete insights

The issue is not that AI cannot analyze employee feedback effectively.

The challenge lies in using general-purpose AI models for complex workforce analytics tasks that require specialized understanding.

Choosing the Right Approach to Employee Feedback Analytics

Artificial intelligence has enormous potential to help organizations better understand their employees.

However, extracting reliable insights from employee feedback requires tools designed specifically for workforce analytics.

Specialized platforms for employee feedback analysis incorporate domain-specific models that better understand HR language, workplace context, and organizational structures.

Solutions like Keatext enable organizations to analyze employee feedback consistently and at scale.

By combining natural language processing with advanced analytics, these platforms help HR teams identify engagement drivers, cultural challenges, and improvement opportunities more accurately.

For Employee Experience leaders, the goal is not just to automate sentiment detection but to generate trustworthy insights that support healthier organizations and stronger workplace cultures.

Final Thoughts

Artificial intelligence is transforming how organizations analyze employee feedback.

While DIY sentiment analysis tools may seem attractive due to their accessibility, hidden technical limitations can undermine the reliability of workforce insights.

When employee feedback guides strategic decisions about culture, leadership, and engagement, accuracy and consistency are essential.

By using specialized analytics solutions, organizations can ensure that AI strengthens their understanding of the workforce rather than introducing uncertainty.