The LLM Era Is Changing How CX Teams Approach Analytics

We have clearly entered the era of Large Language Models (LLMs). These systems have quickly become accessible to organizations of all sizes, making it easier than ever for teams to experiment with AI-powered analytics.

For many Customer Experience (CX) teams, this accessibility has created an exciting possibility: building their own sentiment analysis tools using general-purpose AI models.

With only a few prompts and an API connection, it may seem possible to transform large volumes of feedback—such as survey responses, customer reviews, and support tickets—into structured insights.

At first glance, the approach appears simple. Feed customer feedback into an AI model, ask it to classify sentiment or identify themes, and let it generate insights automatically.

However, before launching a DIY sentiment analysis project, it is important to understand how these systems actually work. While LLMs are powerful tools, they introduce reliability challenges that CX teams often underestimate.

Understanding these risks is essential when feedback insights are used to guide strategic decisions.

The Reliability Check: Is Your Compass Fixed to the North?

The most significant change LLMs bring is in how the system “thinks.” Traditional analytics are deterministic: they rely on fixed rules and calculations, so the same input always produces the same output.

This consistency allows teams to trust their dashboards, reports, and analytics pipelines.

LLMs work differently.

They are probabilistic: instead of applying fixed rules, they produce “best guesses” based on patterns learned during training.

So rather than returning the same output every time, they estimate the most likely response for each input.

This probabilistic nature introduces variability.
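The contrast between fixed rules and probabilistic guesses can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `rule_based_sentiment` is a hypothetical keyword rule, `sampled_sentiment` draws from a made-up set of scores, and neither comes from a real product or model API. The `temperature` parameter mirrors a setting many LLM APIs expose, but the sketch does not model any specific model's behavior.

```python
import math
import random

LABELS = ["positive", "neutral", "negative"]


def rule_based_sentiment(text):
    """Deterministic toy rule: the same text always gets the same label."""
    lowered = text.lower()
    if any(word in lowered for word in ("can't", "cannot", "broken", "error")):
        return "negative"
    if any(word in lowered for word in ("great", "love", "thanks")):
        return "positive"
    return "neutral"


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sampled_sentiment(scores, rng, temperature=1.0):
    """Probabilistic toy: draw a label from the distribution on every call."""
    probs = softmax(scores, temperature)
    return rng.choices(LABELS, weights=probs, k=1)[0]


text = "I can't access my account after the latest update."
# Hypothetical scores for [positive, neutral, negative]; not from a real model.
toy_scores = [0.2, 1.1, 1.4]
rng = random.Random(7)

print({rule_based_sentiment(text) for _ in range(10)})  # {'negative'}
print([sampled_sentiment(toy_scores, rng) for _ in range(10)])  # labels can differ
```

The rule returns the same label on every call, while the sampled version can return different labels for the identical sentence. Lowering `temperature` concentrates probability on the top label, which reduces that variation in this toy.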

Even when analyzing identical pieces of feedback, the model’s interpretation may change slightly depending on context, formatting, or surrounding inputs.

Over time, these small variations can create inconsistencies in sentiment scores, topic classification, or trend analysis.
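One simple way to check for this drift is to classify the same feedback twice and compare the results. The sketch below assumes you already have two lists of labels covering the same items in the same order; the function names and example labels are hypothetical, invented for illustration.

```python
from collections import Counter


def agreement_rate(run_a, run_b):
    """Share of items that received the same label in both runs."""
    if len(run_a) != len(run_b) or not run_a:
        raise ValueError("runs must be non-empty and cover the same items")
    same = sum(a == b for a, b in zip(run_a, run_b))
    return same / len(run_a)


def label_shares(run):
    """Fraction of items assigned to each label, as a dashboard might report it."""
    counts = Counter(run)
    return {label: counts[label] / len(run) for label in sorted(counts)}


# Hypothetical labels from classifying the same five comments on two days.
monday = ["negative", "neutral", "negative", "positive", "neutral"]
tuesday = ["negative", "negative", "negative", "positive", "neutral"]

print(agreement_rate(monday, tuesday))  # 0.8
print(label_shares(monday))   # {'negative': 0.4, 'neutral': 0.4, 'positive': 0.2}
print(label_shares(tuesday))  # {'negative': 0.6, 'neutral': 0.2, 'positive': 0.2}
```

A single disagreement out of five comments moves the reported share of negative feedback from 40% to 60%, even though the underlying comments did not change.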

For CX leaders who rely on feedback data to prioritize improvements, such inconsistencies can lead to misleading conclusions.

The Implications of Next-Word Probability

When an LLM analyzes a customer comment, it does not follow a fixed classification rule.

Instead, it predicts the most probable interpretation based on its training.

For example, a support ticket that says:

“I can’t access my account after the latest update.”

could be interpreted as:

- Product issue

- Login problem

- Technical error

The model selects whichever label appears most probable in the context of the prompt and training data.

However, subtle contextual changes can shift these probabilities.

Small variations in phrasing, tokenization, or input formatting can influence how the model categorizes the feedback.
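The arithmetic behind such a flip can be illustrated with a short sketch. It assumes hypothetical scores for the three labels in the example above and models a formatting change as a small, made-up nudge to those scores. Real models do not expose their behavior this simply, so treat this as an illustration of how close probabilities behave, not as a description of any specific model.

```python
import math

LABELS = ["Product issue", "Login problem", "Technical error"]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_label(scores):
    """Greedy choice: return the most probable label and its probability."""
    probs = softmax(scores)
    best = max(range(len(LABELS)), key=lambda i: probs[i])
    return LABELS[best], round(probs[best], 3)


# Hypothetical scores for "I can't access my account after the latest update."
baseline = [1.30, 1.35, 0.90]
# The same ticket after a trivial formatting change, modeled as a made-up nudge.
nudge = [0.08, -0.04, 0.00]
reformatted = [b + n for b, n in zip(baseline, nudge)]

print(top_label(baseline)[0])     # Login problem
print(top_label(reformatted)[0])  # Product issue
```

Even when the model always picks the single most probable label, a nudge of a few hundredths is enough to change the winner when the candidates are close.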

When analyzing thousands of feedback messages, these small variations can accumulate, producing fluctuating sentiment scores or inconsistent topic labeling.
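A small simulation shows how these per-ticket flips add up across a dataset. It assumes 5,000 hypothetical tickets whose top two label scores sit close together, and it stands in for phrasing and formatting effects with random jitter of an assumed size (`noise`). Each run re-classifies the same tickets; only the jitter changes. The classifier and all numbers are illustrative, not drawn from a real model.

```python
import random
from collections import Counter

LABELS = ["Product issue", "Login problem", "Technical error"]


def classify(scores, rng, noise=0.1):
    """Toy classifier: greedy pick after adding small random jitter.

    The jitter stands in for phrasing, tokenization, and formatting effects;
    its size (`noise`) is an assumption, not a measured property of any model.
    """
    jittered = [s + rng.gauss(0.0, noise) for s in scores]
    return LABELS[max(range(len(LABELS)), key=lambda i: jittered[i])]


def run_share(tickets, seed, label="Login problem"):
    """Share of tickets assigned `label` in one full pass over the dataset."""
    rng = random.Random(seed)
    counts = Counter(classify(scores, rng) for scores in tickets)
    return counts[label] / len(tickets)


# 5,000 hypothetical tickets whose first two label scores sit close together.
data_rng = random.Random(0)
tickets = [
    [1.3 + data_rng.uniform(-0.1, 0.1), 1.3 + data_rng.uniform(-0.1, 0.1), 0.9]
    for _ in range(5000)
]

for seed in range(3):
    print(f"run {seed}: {run_share(tickets, seed):.1%} labeled 'Login problem'")
```

Every run uses identical tickets, yet the reported share of login problems moves from run to run. On a dashboard, that movement can look like a trend even though nothing about the customers changed.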

The Attention Bias in AI Feedback Analysis

Another challenge when using general-purpose AI models for sentiment analysis is how they process large volumes of text.

In any data science workflow, the quality and distribution of the data strongly influence a model's results. With LLMs, context plays an even greater role: whatever else appears in the same prompt or batch can shape how each individual message is interpreted.

When analyzing customer feedback at scale—such as support tickets, survey responses, or chat transcripts—certain types of bias can emerge.

Batch Contamination

When many messages about a specific issue are processed together in the same batch, the model may begin associating unrelated conversations with that topic.

For example, if hundreds of tickets about a server outage appear in the same batch, the model may start interpreting neutral questions as technical complaints simply because they occur within the same context.

This phenomenon is known as batch contamination.
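The toy sketch below makes the mechanism explicit. It assumes a deliberately crude "model" in which a ticket's score combines its own content with the share of outage tickets around it; `classify_batch`, `is_outage`, and the `context_weight` knob are hypothetical. Real LLMs do not work this way internally, but the sketch shows why the same neutral question can be labeled differently inside a batch than on its own.

```python
def is_outage(ticket):
    """Crude keyword check standing in for 'this ticket is about the outage'."""
    return "outage" in ticket.lower()


def classify_batch(batch, context_weight=1.0):
    """Toy classifier in which each label is influenced by the rest of the batch.

    own signal:     1.0 if the ticket itself mentions the outage, else 0.0
    context signal: share of the *other* tickets in the batch that do
    context_weight: hypothetical strength of the leak (0.0 = no contamination)
    """
    flags = [is_outage(t) for t in batch]
    labels = []
    for i, own in enumerate(flags):
        others = flags[:i] + flags[i + 1:]
        context_signal = sum(others) / max(len(others), 1)
        score = float(own) + context_weight * context_signal
        labels.append("technical complaint" if score >= 0.5 else "general question")
    return labels


outage_tickets = [f"Server outage again, ticket {n}" for n in range(300)]
question = "How do I change the billing email on my invoice?"

print(classify_batch(outage_tickets + [question])[-1])  # technical complaint
print(classify_batch([question])[0])                     # general question
```

Setting `context_weight=0.0` models classifying each ticket in isolation, which in this toy removes the contamination entirely.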

Over time, it can inflate the perceived severity of certain problems or distort sentiment trends.

The “Lost-in-the-Middle” Effect

Large Language Models can also struggle with long pieces of text, such as detailed support conversations.

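Research has shown that many models make better use of information placed at the beginning or end of a long input than of information buried in the middle (see, for example, Liu et al., 2023, “Lost in the Middle: How Language Models Use Long Contexts”).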

In customer support conversations, the most important information is often located in the middle of the dialogue—where the real issue is explained.

If the model overlooks this section, it may produce a confident but incorrect interpretation of the customer’s problem.
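To picture the effect, the sketch below assigns each turn of a short, invented support conversation a weight from a toy U-shaped profile: full weight at either end, lowest in the middle. The function `toy_position_weight` and its `floor` value are illustrative assumptions, not measurements of any model.

```python
def toy_position_weight(index, length, floor=0.3):
    """Toy U-shaped profile: 1.0 at either end, dropping to `floor` at the center.

    Both the shape and the floor value are illustrative assumptions,
    not measurements of any particular model.
    """
    if length == 1:
        return 1.0
    relative = index / (length - 1)               # 0.0 at the start, 1.0 at the end
    centrality = 1.0 - abs(2.0 * relative - 1.0)  # 0.0 at either end, 1.0 in the middle
    return 1.0 - (1.0 - floor) * centrality


conversation = [
    "Customer: Hi, I need some help.",
    "Agent: Happy to help. What's going on?",
    "Customer: Since the update, my invoices export in the wrong currency.",
    "Agent: Thanks, I'll pass this to the billing team.",
    "Customer: OK, thanks for your help!",
]

for i, turn in enumerate(conversation):
    print(f"{toy_position_weight(i, len(conversation)):.2f}  {turn}")
```

The turn that states the actual problem receives the lowest weight (0.30), while the friendly greeting and sign-off receive the highest. A model that leans on the ends of this conversation could plausibly report a satisfied customer.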

Why This Matters for CX Specialists

Customer Experience teams rely heavily on feedback analytics to guide decision-making.

Organizations analyze customer feedback to identify pain points, prioritize improvements, and understand the drivers of satisfaction and dissatisfaction.

If sentiment classification fluctuates or key issues are misidentified, teams risk focusing on the wrong priorities.

This can lead to:

- Misguided customer experience initiatives

- Incorrect prioritization of product improvements

- Strategic decisions based on unstable insights
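A few lines of arithmetic show how small labeling swings can reorder priorities. The sketch assumes two hypothetical classification runs over the same 100 tickets whose theme counts differ by only three tickets; `rank_issues` is an illustrative helper, not part of any particular tool.

```python
from collections import Counter


def rank_issues(labels, top_n=3):
    """Rank themes by ticket volume, the way a prioritization report might."""
    return [theme for theme, _ in Counter(labels).most_common(top_n)]


# Hypothetical theme labels for the same 100 tickets from two classification runs.
run_1 = ["Login problem"] * 34 + ["Product issue"] * 33 + ["Technical error"] * 33
run_2 = ["Login problem"] * 31 + ["Product issue"] * 36 + ["Technical error"] * 33

print(rank_issues(run_1)[0])  # Login problem
print(rank_issues(run_2)[0])  # Product issue
```

Three reassigned tickets out of 100 are enough to change which issue lands at the top of the list, and with it, where a team might direct its next round of improvements.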

The problem is not that artificial intelligence is unreliable.

The real challenge is that general-purpose AI models are not always optimized for analyzing complex feedback datasets.

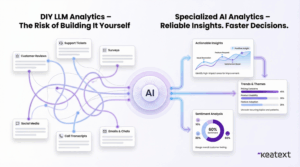

Choosing the Right Approach to AI-Driven Feedback Analysis

Artificial intelligence can transform how organizations understand their customers.

However, extracting reliable insights from unstructured customer feedback requires specialized analytics capabilities.

Platforms designed specifically for customer feedback analysis are built to address challenges such as model stability, contextual bias, and large-scale data processing.

Solutions like Keatext are designed to analyze feedback data at scale while maintaining consistency and interpretability.

These platforms combine natural language processing with domain-specific models that are optimized for understanding customer and employee feedback.

For CX leaders, the goal is not simply to automate sentiment detection. The real objective is to generate stable, trustworthy insights that support better decision-making.

Final Thoughts

Artificial intelligence is rapidly transforming how organizations analyze feedback.

DIY sentiment analysis systems may appear attractive because they are quick to prototype and easy to experiment with.

However, hidden technical challenges can quietly undermine the reliability of the insights they produce.

When feedback analytics inform strategic decisions, accuracy and consistency are essential.

Choosing the right tools ensures that AI becomes a reliable compass for CX teams—rather than a source of confusion.